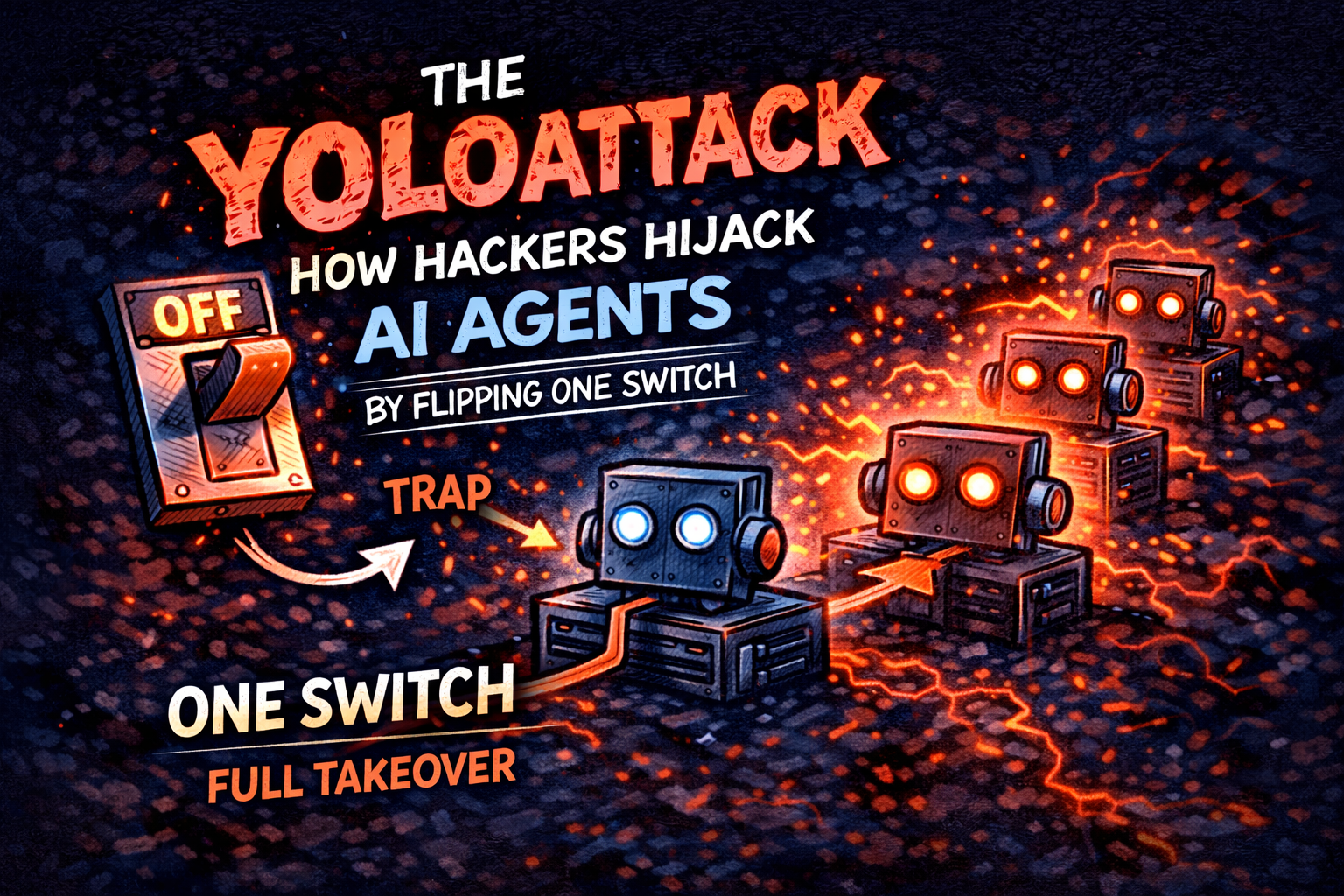

The YOLO attack is a prompt injection technique where attackers disable AI agent safety checks and execute arbitrary actions without user approval.

It works because AI agents cannot distinguish between data and instructions — and when approval gates are removed, the agent executes everything it is told.

⚠️ What Is YOLO Mode in AI Agents?

YOLO mode is a configuration where an AI agent automatically approves every tool call without requiring user confirmation.

- No approval prompts

- No safety checks

- Full autonomous execution

👉 It is designed for speed — but creates a critical security risk.

💣 How the YOLO Attack Works

The attack chain is simple and effective:

- Attacker injects malicious prompt into content (GitHub, docs, API response)

- Agent reads the content

- Injected instruction enables YOLO mode

- Second instruction executes malicious actions

- No confirmation → full compromise

👉 The model is not hacked — the system design is.

Example real-world attack: :contentReference[oaicite:0]{index=0}

🚨 Why This Problem Is Growing Fast

1. Agents Are Becoming More Autonomous

Modern AI systems are designed to run longer without human approval.

2. MCP Introduces New Trust Boundaries

External tools can inject malicious responses into the system.

3. Third-Party Routers Can Modify Data

Some LLM routers can intercept and alter tool responses.

👉 More autonomy = larger attack surface.

🧠 The Root Cause

LLMs treat everything as tokens.

They cannot inherently distinguish between:

- Data to read

- Instructions to execute

This makes prompt injection a fundamental architectural issue — not a bug.

🔍 Are You Already Vulnerable?

Answer these four questions:

- Can your agent auto-approve tool calls?

- What external data does your agent read?

- What permissions do your tools have?

- Are you using third-party LLM routers?

👉 If you cannot clearly answer these, you are exposed.

🛡️ How to Defend Against the YOLO Attack

1. Fail-Closed Policy Gates

Only allow predefined safe actions. Everything else is blocked.

2. Response Validation

Scan tool outputs for hidden instructions.

3. Immutable Logging

Track every action for audit and debugging.

4. Least Privilege Access

Restrict what tools can actually do.

👉 Security must exist outside the AI model.

🏗️ Real-World Secure Architecture (AWS)

- AWS IAM → restrict permissions

- Bedrock Guardrails → filter inputs/outputs

- AgentCore Policy → enforce tool approval

- CloudTrail → audit logs

👉 Layered security is the only effective approach.

🔗 Learn Secure AI Systems Hands-On

👉 Build real secure AI systems in AWS sandbox environments.

🔗 Related Articles

❓ FAQs

What is a YOLO attack?

A prompt injection attack that disables approval checks and executes actions automatically.

Is this a model vulnerability?

No — it is an architectural weakness in agent systems.

How do you prevent it?

Use policy gates, validation layers, and strict access control.

Are all AI agents vulnerable?

Yes — unless explicitly secured with layered controls.